This is the story of how we built the Zodinet AI Agent Platform — a real-time, bilingual, avatar-driven conversation system — and how we can deliver the same thing for your brand, your museum, your lobby, your product, in weeks instead of quarters.

The Challenge

A client came to us with an idea that sounds simple when you say it out loud: put a realistic AI avatar in a physical space — a museum, a showroom, a lobby — and let visitors walk up and talk to it. In two languages. About a specific topic. With answers pulled from curated knowledge. In real time. Without hallucinating, wandering off topic, or going dark when credits run out.

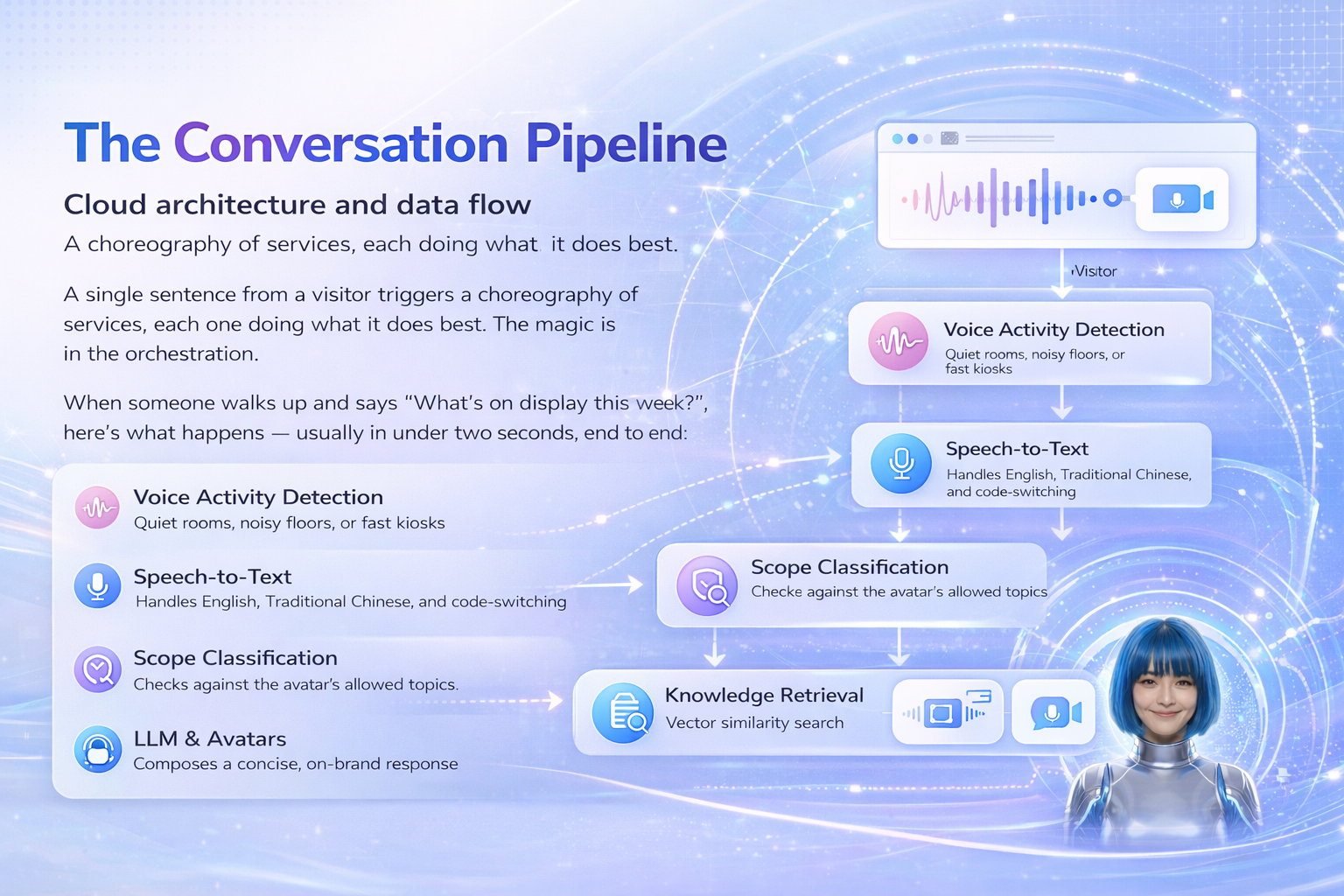

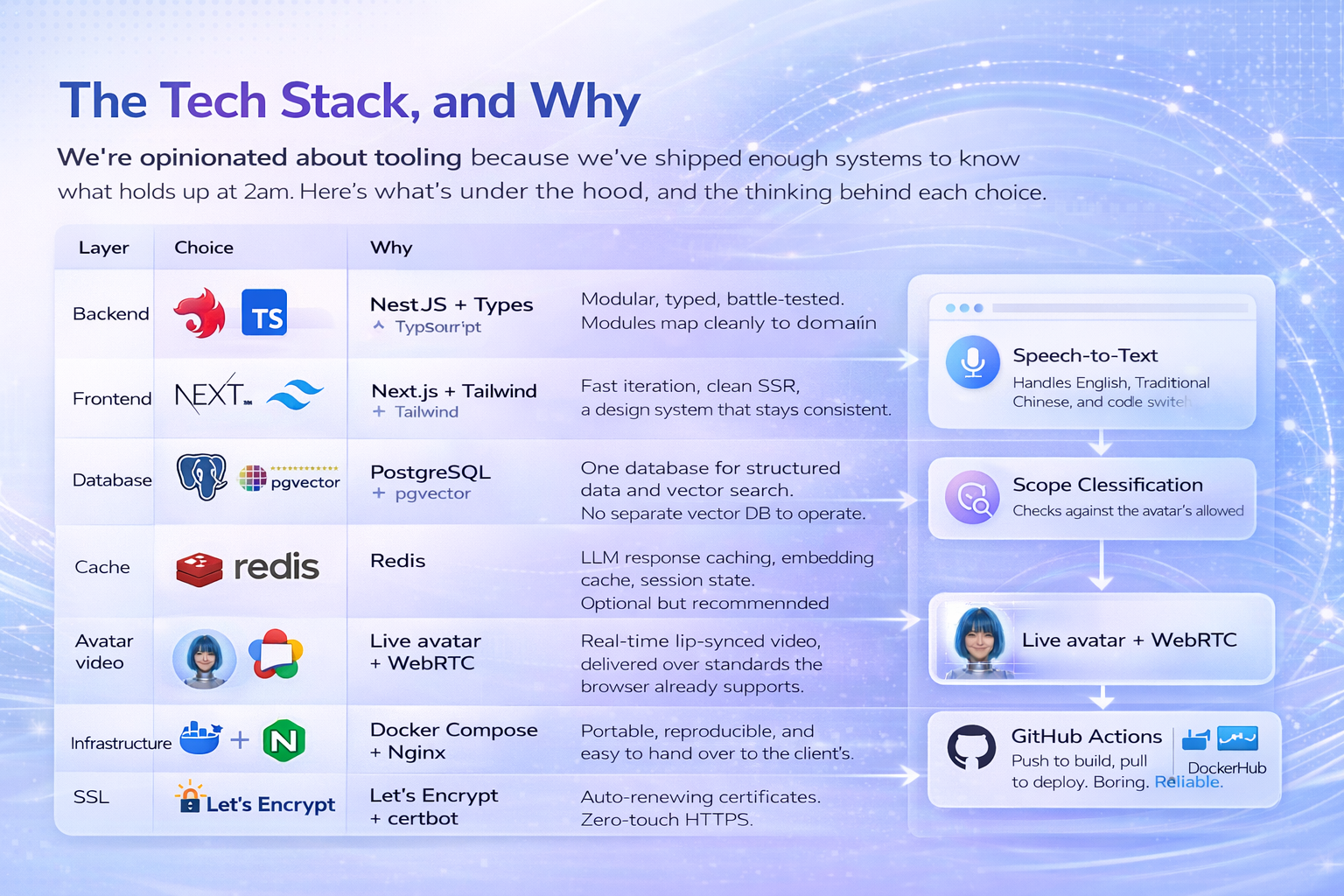

Everyone who’s built with LLMs knows the pain points hiding in that sentence. Latency. Language switching. Scope control. Voice-activity detection in noisy rooms. Avatar lip-sync. Cost overruns. Operating-hour rules. Offline messaging. Content governance. The list compounds fast.

What Makes It Ship-Ready

Plenty of teams can stitch an LLM to a TTS engine. Fewer can ship something an enterprise will trust to put on their front door. Here’s what we build in by default, because we’ve learned that leaving any of these to “phase two” is how projects die in phase one.

Cost governance, from day one

Every third-party call (avatar streaming minutes, language model tokens, speech-recognition hours, speech-synthesis characters) is metered in the dashboard. Teams see, at a glance, how much today cost and how much of each provider’s budget is left. Cheap-and-fast models handle the default path; higher-quality models are the fallback. Responses and embeddings are cached, so a repeated question triggers no new model call.
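To make the pattern concrete, here is a minimal sketch of cache-first answering with a cheap default model, a higher-quality fallback, and per-provider metering. The names `ProviderBudget` and `CachedResponder`, and the convention that a model returns a `(reply, cost)` pair with `None` meaning "could not answer", are assumptions for illustration and not the platform's actual interfaces.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Tuple

# Hypothetical model signature: question -> (reply or None, cost in USD).
Model = Callable[[str], Tuple[Optional[str], float]]


@dataclass
class ProviderBudget:
    """Tracks spend against a budget for one third-party provider."""
    limit_usd: float
    spent_usd: float = 0.0

    def record(self, cost_usd: float) -> None:
        self.spent_usd += cost_usd

    @property
    def remaining_usd(self) -> float:
        return max(self.limit_usd - self.spent_usd, 0.0)


@dataclass
class CachedResponder:
    """Answers from cache first, then the cheap model, then the fallback."""
    cheap_model: Model
    fallback_model: Model
    budget: ProviderBudget
    cache: Dict[str, str] = field(default_factory=dict)

    def answer(self, question: str) -> Optional[str]:
        # Normalize case and whitespace so trivial variants share a cache key.
        key = " ".join(question.lower().split())
        if key in self.cache:
            return self.cache[key]  # cache hit: no model call, no new cost
        reply, cost = self.cheap_model(question)
        self.budget.record(cost)
        if reply is None:  # cheap model could not answer, so escalate
            reply, cost = self.fallback_model(question)
            self.budget.record(cost)
        if reply is not None:
            self.cache[key] = reply
        return reply
```

A dashboard could read `remaining_usd` for each provider to show how much budget is left. A production cache would probably also key on language and expire entries when the knowledge base changes.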

Operating hours, without downtime anxiety

A weekly schedule controls when voice interaction is active. Outside those hours, visitors see a polite offline message — in the right language. Operators can temporarily override the schedule for a demo or a VIP visit, and clear it afterwards. No code change. No deploy.
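A weekly schedule with a temporary operator override can be modeled in a few lines. The sketch below is one possible shape, assuming a hypothetical `OperatingSchedule` class stored as configuration data; the field names and the Monday-is-zero weekday convention (Python's own) are illustrative choices.

```python
from dataclasses import dataclass, field
from datetime import datetime, time
from typing import Dict, Optional, Tuple


@dataclass
class OperatingSchedule:
    # weekday (0 = Monday) -> (opens, closes); days not listed are closed.
    weekly: Dict[int, Tuple[time, time]] = field(default_factory=dict)
    # None = follow the schedule; True/False = operator forces open/closed.
    override: Optional[bool] = None

    def is_active(self, now: datetime) -> bool:
        if self.override is not None:
            return self.override
        hours = self.weekly.get(now.weekday())
        if hours is None:
            return False
        opens, closes = hours
        return opens <= now.time() < closes

    def clear_override(self) -> None:
        self.override = None


# Usage: open Mondays 09:00 to 17:00, then force open for an evening demo.
schedule = OperatingSchedule(weekly={0: (time(9, 0), time(17, 0))})
schedule.override = True
schedule.clear_override()
```

Because the schedule and the override are plain data, an admin panel can change them at runtime, which is what makes "no code change, no deploy" possible.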

Scope control that actually works

We layer three mechanisms: an instant keyword block for hard-no topics, a keyword whitelist for obvious in-scope questions, and a semantic check against a natural-language description of the avatar’s purpose for everything in between. Out-of-scope replies are pre-written, bilingual, and never consume LLM tokens.
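The three layers can be sketched as a single function that checks them in order. Everything named here is hypothetical: the keyword sets, the purpose description, and the threshold are made-up examples, and the bag-of-words cosine similarity is only a stand-in for whatever embedding-based semantic check a real deployment would use.

```python
import math
import re
from collections import Counter
from typing import Callable, List

BLOCKED_KEYWORDS = {"politics", "lawsuit"}           # hypothetical hard-no topics
ALLOWED_KEYWORDS = {"ticket", "exhibit", "opening"}  # hypothetical in-scope cues
PURPOSE = ("answer visitor questions about the museum exhibits, "
           "tickets and opening hours")              # hypothetical description


def _tokens(text: str) -> List[str]:
    return re.findall(r"[a-z']+", text.lower())


def toy_similarity(a: str, b: str) -> float:
    """Bag-of-words cosine similarity; a stand-in for an embedding model."""
    va, vb = Counter(_tokens(a)), Counter(_tokens(b))
    dot = sum(va[t] * vb[t] for t in va)
    norm = (math.sqrt(sum(v * v for v in va.values()))
            * math.sqrt(sum(v * v for v in vb.values())))
    return dot / norm if norm else 0.0


def classify(utterance: str,
             similarity: Callable[[str, str], float] = toy_similarity,
             threshold: float = 0.2) -> str:
    words = set(_tokens(utterance))
    if words & BLOCKED_KEYWORDS:   # layer 1: instant block
        return "blocked"
    if words & ALLOWED_KEYWORDS:   # layer 2: obvious in-scope question
        return "in_scope"
    # Layer 3: semantic check against the purpose description.
    if similarity(utterance, PURPOSE) >= threshold:
        return "in_scope"
    return "out_of_scope"
```

Only an `in_scope` result would go on to the language model. The other two results map to pre-written replies, which is why they cost no tokens.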

Knowledge ingestion, not prompt engineering

Clients don’t want to rewrite their documents as system prompts. They want to drag in a PDF and have it just work. We extract, chunk, embed, and index — with support for PDF, DOCX, TXT, and Markdown — and surface the whole library in the admin panel for review and curation.
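As a rough illustration of the chunk, embed, and index steps, the sketch below splits already-extracted text into overlapping word windows and ranks them against a query. It assumes text extraction from PDF or DOCX has happened upstream. The names `chunk_text`, `toy_embed`, and `KnowledgeIndex` are hypothetical, and the word-count "embedding" is a placeholder for a real embedding model and vector store.

```python
import math
import re
from collections import Counter
from typing import Callable, List, Tuple


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> List[str]:
    """Split text into windows of `size` words sharing `overlap` words."""
    step = size - overlap
    if step <= 0:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    if not words:
        return []
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def toy_embed(text: str) -> Counter:
    """Word counts as a sparse vector; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class KnowledgeIndex:
    def __init__(self, embed: Callable[[str], Counter] = toy_embed):
        self.embed = embed
        self.entries: List[Tuple[str, str, Counter]] = []  # source, chunk, vector

    def add_document(self, source: str, text: str,
                     size: int = 40, overlap: int = 10) -> None:
        for chunk in chunk_text(text, size, overlap):
            self.entries.append((source, chunk, self.embed(chunk)))

    def search(self, query: str, k: int = 3) -> List[Tuple[str, str]]:
        qv = self.embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(qv, e[2]), reverse=True)
        return [(source, chunk) for source, chunk, _ in ranked[:k]]
```

Keeping the source name next to each chunk is what lets an admin panel show which document an answer came from during review and curation.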

Multiple languages, done properly

Not translation. Bilingual. Two voices per avatar, language detection per utterance, per-language offline messages, per-language out-of-scope replies, and a knowledge base that holds documents tagged by language. Visitors switch languages mid-sentence and the avatar keeps up.
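One way to picture per-utterance routing is a profile per language holding the voice and the canned replies, selected fresh for every utterance. The sketch below is illustrative only: the language pair (`en` and `vi`), the voice identifiers, the message texts, and the `route_utterance` function are all assumptions, and the language detector is passed in rather than implemented.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass(frozen=True)
class LanguageProfile:
    voice_id: str
    offline_message: str
    out_of_scope_reply: str


# Hypothetical profiles; a real deployment would load these from admin config.
PROFILES = {
    "en": LanguageProfile(
        voice_id="voice-en-1",
        offline_message="We are offline right now. Please come back during opening hours.",
        out_of_scope_reply="I can only answer questions about this exhibit.",
    ),
    "vi": LanguageProfile(
        voice_id="voice-vi-1",
        offline_message="Hiện tại chúng tôi đang ngoại tuyến. Vui lòng quay lại trong giờ mở cửa.",
        out_of_scope_reply="Tôi chỉ có thể trả lời các câu hỏi về triển lãm này.",
    ),
}
DEFAULT_LANGUAGE = "en"


def route_utterance(
    utterance: str,
    detect_language: Callable[[str], str],
    is_open: bool,
    in_scope: Callable[[str], bool],
) -> Tuple[LanguageProfile, Optional[str]]:
    """Pick the profile for this utterance and a canned reply, if one applies.

    Returns (profile, None) when the utterance should go on to the model.
    """
    lang = detect_language(utterance)  # detected per utterance, not per session
    profile = PROFILES.get(lang, PROFILES[DEFAULT_LANGUAGE])
    if not is_open:
        return profile, profile.offline_message
    if not in_scope(utterance):
        return profile, profile.out_of_scope_reply
    return profile, None
```

Detecting the language on every utterance, rather than once per session, is what allows a visitor to switch languages partway through a conversation and still hear the matching voice.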

What We Can Build for You

The Zodinet AI Agent Platform is a pattern, not a single product. The same foundation — real-time conversation, bilingual operation, scoped knowledge, admin-driven configuration, cost governance — adapts to many use cases. A few we’ve scoped or shipped:

Why Work With Zodinet

We’re a Vietnam-based software studio with a team of senior full-stack engineers who’ve shipped real products for clients across the U.S., Europe, and Asia. We don’t do slide decks that promise AI magic. We ship running systems.

What clients get when they work with us

- A working platform, not a prototype. Every project we deliver goes to production. Our deliverables are containers you can run, documentation your team can read, and a system your operators can actually use.

- Senior engineers on your problem. No bait-and-switch staffing. The people who scope your project are the people who build it.

- Transparent costs, for you and your users. We design for unit economics from day one — cheap models as default, caching by default, budget dashboards by default. You won’t be surprised by a cloud bill.

- We co-create rather than hand off. Our engagement model is a genuine partnership: we work alongside your team, train your operators, and leave behind documentation your team can maintain without us.